As a follow-up to the presentation I gave at the ATA this year, which was very well attended (photo courtesy of Wesley Budd), I thought it might be useful to highlight some of the things covered on the day.

The session itself was a little ad hoc, deliberately so, because I wanted to make sure the content was relevant to the attendees and because it wasn’t a getting-started session. So I covered quite a lot of material that, judging by the number of people furiously scribbling in their notebooks, could use a little follow-up!

Some of the things I covered I have written about in the past so a simple link might be appropriate here… but if you read this and think I missed something then please drop a comment in and I’ll add it..! I may not do this in the same order… I’ve already forgotten what I covered (my excuse is jetlag!).


Project Settings vs Tools Options

I started with a question to see how many people understood this concept in Studio because it can be one of the most confusing areas for new users. It looked as though the majority of the audience were clear… but I ran through some principles to explain the differences. This is also something I have covered twice in different blog articles so the links are here:

Studio… Global or Project Settings?

“Open Document”… or did you mean “Create a single file Project”

Glossaries and the CSV filetype

In this example I showed how to use two features of Studio… first the CSV filetype and secondly the OpenExchange. So I took a spreadsheet that contained two columns; the first column was complete with English terms suitable for a glossary. The second column was for the translated version of these terms, but it was incomplete. I then saved this spreadsheet as a CSV file in Microsoft Excel and opened it in Studio, where the first column is placed into the source side of the Studio Editor and the second column into the target side. I also set the existing translations to appear as confirmed and locked (optional settings). I completed the translation and saved the target file, which gave me a fully populated CSV with no missing terms in the target language (although this is not a prerequisite… missing terms won’t prevent the next step).
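If you want to check which terms are still untranslated before or after this step, a few lines of Python will do it outside Studio. This is only a sketch: the sample terms and the `missing_targets` helper are made up for illustration, and it assumes a plain two-column, comma-separated file with the source term first and the translation second.

```python
import csv
import io

# Hypothetical glossary content: column 1 is the English term, column 2 the translation.
raw = "house,maison\ncar,\ntree,arbre\n"

def missing_targets(csv_text):
    """Return the source terms whose second column is empty or absent."""
    rows = csv.reader(io.StringIO(csv_text))
    # Skip completely blank lines, then flag rows with no usable translation.
    return [row[0] for row in rows if row and (len(row) < 2 or not row[1].strip())]

print(missing_targets(raw))  # prints ['car']
```

For a real file you would read the text from disk instead of the inline string, and you may need a different delimiter depending on how Excel saved the CSV on your system.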

The next step was to take the Glossary Converter from the OpenExchange and create a MultiTerm Termbase just by dragging and dropping the CSV onto an icon on my desktop… and back again.

I’ve covered both of these things in the past so here’s a couple of links to more resources on these things… you will find variations on the theme sometimes, but still worth reviewing I think:

A couple of little known gems in SDL Trados Studio

Creating a TM from a Termbase, or Glossary, in SDL Trados Studio

Glossaries made easy…

Working with tags is always fun to show because there are many ways to do it in Studio and often users have no idea it is possible at all… so after copying and pasting for months this can be a revelation. I wrote an article specifically on this subject so this should be a good memory jogger:

Simple guide to working with Tags in Studio

This article also covers the different settings before you start that we discussed in the presentation so I think that article is pretty exhaustive and to the point.

A little regex and search and replace

This part of the presentation I based on a question I received the day before at the ATA because it sounded like a really good, and practical, example of some real life problems. In this case the Translator had received an sdlxliff (Studio bilingual file) from another user going from English to French. The document was littered with quotation marks like this:

“some text in here”

Instead of the required format like this:

«some text in here»

So I used a new feature in Studio 2011 SP2 that provides for search and replace using regular expressions. Regular expressions are a bit of a dark art for many users, but learning just a little bit can be a life saver as Studio can use these in many places. I have written a few articles on this already so these are the links:

Regular Expressions – Part 1

Regex… and “economy of accuracy” (Regular Expressions – Part 2)

Search and replace with Regex in Studio – Regular Expressions Part 3

… and on the example I showed on the day. I searched for this in the target… with the regular expressions option checked in the search window:

"([a-zA-Z\s]*)"

Then replaced with this:

«$1»

This will find things like this:

“some text” or “text” or “Some Text” or “Text” or “some more Text” etc.

And replace with these:

«some text» or «text» or «Some Text» or «Text» or «some more Text» etc.

The reason for using regular expressions for this instead of just a simple search and replace on the quotes themselves is that you would have to pay careful attention and do this twice, once for the left quote and then again for the right… taking care to click replace and then next to ensure you put them in the right place each time. For a document with a lot of these it would be an error-prone and arduous task… with regular expressions it’s a one-click process after you get the expression right.
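To experiment with the same idea outside Studio, here is a minimal Python sketch. It uses a pattern along the lines of the one above (letters and whitespace captured between a pair of quotes), with two assumptions of its own: the quote characters are widened to accept both straight and curly quotes, and the `to_guillemets` helper name is made up for the example. Note that Python writes the captured group as `\1` where Studio uses `$1`.

```python
import re

# Capture letters and whitespace between an opening and a closing quote.
# Accepting both straight and curly quotes is an assumption added for this sketch.
QUOTED = re.compile(r'["“]([a-zA-Z\s]*)["”]')

def to_guillemets(text):
    """Replace each quoted run with the same text wrapped in « »."""
    # \1 is Python's way of writing the first captured group ($1 in Studio).
    return QUOTED.sub(r'«\1»', text)

sample = 'He said “some text” and then "Some Text" again.'
print(to_guillemets(sample))  # prints He said «some text» and then «Some Text» again.
```

One thing this makes easy to see: `[a-zA-Z\s]` only covers unaccented letters and whitespace, so a quoted word such as “été” would be left untouched. That may be fine for a quick clean-up, but for French targets you would probably want to widen the character class.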

The last of the links, Part 3, covers how to use search and replace specifically so if you’re interested in just this example then maybe just read that one.

Poorly formatted documents, segmentation and the display filter

Phew… I think I covered quite a lot of features in this part of the presentation.

- How to use segmentation rules at a basic level to split numbers from the text

- How to use the display filter… in particular using regular expressions but also some of the preconfigured things such as locked segments

- How to select multiple lines and change the status and lock them
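As a rough illustration of the kind of expression that can pick out number-only segments, here is a small Python sketch. The pattern is an assumption for this example, not the one used on the day, and the sample segments are invented; it simply treats a segment as number-only when it contains nothing but digits, whitespace, full stops and commas.

```python
import re

# Assumed pattern: the whole segment is digits, whitespace and common separators.
NUMBER_ONLY = re.compile(r'^[\d\s.,]+$')

# Invented sample segments for illustration.
segments = ["1,250.00", "Total: 1,250.00", "42", "See page 42"]

# Keep only the segments that match from start to end.
number_only = [s for s in segments if NUMBER_ONLY.match(s)]
print(number_only)  # prints ['1,250.00', '42']
```

This is deliberately loose (a segment containing only a comma would also match), which is the same “economy of accuracy” trade-off: good enough for filtering a known document, not a general-purpose number parser.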

I did write a blog article about this some time ago and also did a video (no sound… but maybe that’s a good thing!) so you can get a good overview of the things I covered here through these resources:

Studio 2011, display filter and freestyle documents

The accompanying YouTube video

Watching this back now I think I actually used a different segmentation rule… although it worked for this example and shows how “economy of accuracy” plays a part here. So just in case here’s a screenshot of the settings I used at the ATA which I think are better for this example:

The Package Reader

This part of the presentation took a look at an excellent OpenExchange application written by Quality Translations. You can get this application here:

Package Reader

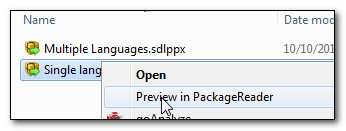

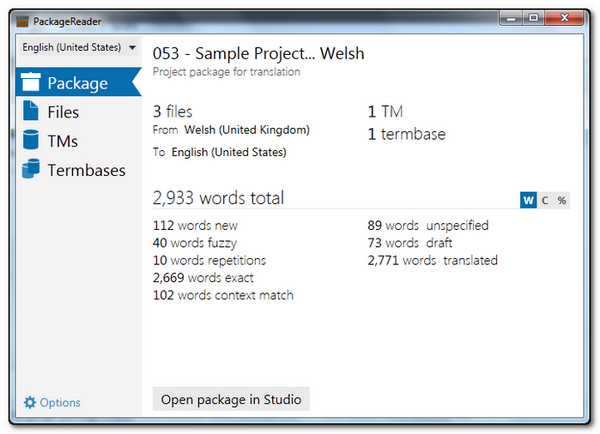

This application allows you to see what’s in a Studio Package, or Return Package, simply by right-clicking on the Package in Windows Explorer.

You can actually see the files, the TMs, the termbases and the analysis all without opening up the Package in Studio at all. So this is great for checking what’s in the Package before accepting a job, or for making sure that your Return Package also includes everything you intended (particularly useful because you cannot open your own Return Package in Studio).

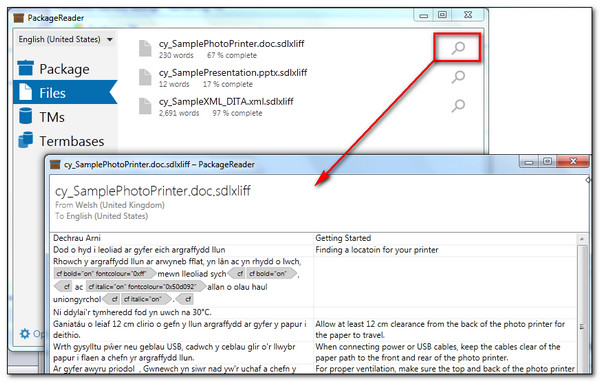

It even allows you to see the content of the files so you can get a flavour of the material before you say yes to the work, or to make sure you included the correct version of a file if you maintain backup copies.

I think this is an excellent example of the type of diverse and useful applications that are developed, and made available by generous developers, on the OpenExchange.

The SDLXLIFF to Legacy Converter

I think I gave a quick demonstration of how to use the Legacy Converter on request from someone in the audience. This is another great tool from the OpenExchange, written by Patrick Hartnett, that you can download from here:

SDLXLIFF to Legacy Converter

The tool allows you to create a TTX/Bilingual Doc(x)/TMX from an sdlxliff, or from a complete Studio Project through the sdlproj file. I surprised myself by realising I can’t find anything we have written about this application (I’m sure we have done)… but I did find this article here by SuperCAT Translation Tutorials that seems to cover the basics well enough:

SDL Studio to Trados Legacy Converter

What it doesn’t cover are some of the things you might not have thought about that make this application even more useful. So, for example, say you use the Studio display filter to remove number-only segments in the Editor View, but you don’t want to include them in the analysis either. Studio separates the placeables and tags in the analysis but doesn’t allow you to exclude specific placeables (like numbers) from the analysis itself… see this article for an explanation of the Studio analysis – Understanding SDL Trados Studio text counts.

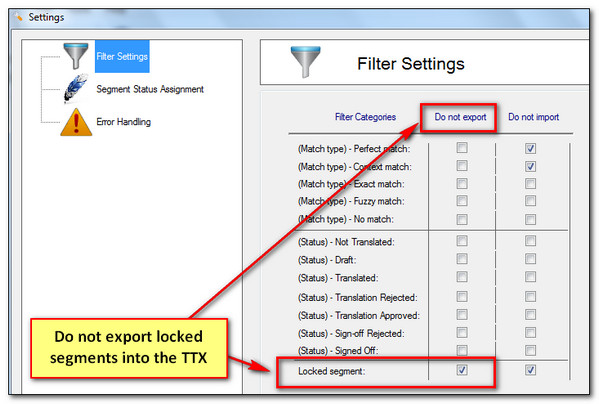

You can use the Legacy Converter to export a TTX that is based on the content of your sdlxliff but excludes locked segments:

Then add the TTX into your Studio Project and analyse it. In terms of wordcount it might be a good workaround for you and could help to resolve wordcount discrepancies between analyses… good for number-only segments but not numbers in general, of course.

Summary

We only had an hour for this presentation… and on reflection I think we covered quite a bit. I know we had questions along the way that we also dived into… so if you can think of something I covered on the day and haven’t elaborated on here drop me a note in the comments and I’ll add it in.

Thanks for attending too..! I thoroughly enjoyed the ATA in San Diego where I had the chance to meet many translators I speak to in the forums or via email. The entertainment was also great… not to mention the Halloween festivity around the Hard Rock Hotel we stayed in..!
